Machine learning operations (MLOps) is quickly becoming a critical component of successful data science project deployment in the enterprise. It is a process that helps organizations and business leaders generate long-term value and reduce the risk associated with data science, machine learning, and AI initiatives. This post briefly introduces MLOps and shows exactly how businesses can leverage MLOps principles to mitigate AI project risks.

What Is MLOps?

MLOps is the standardization and streamlining of machine learning lifecycle management. It borrows heavily from DevOps, which streamlines how software changes and updates are delivered. The two have quite a bit in common. For example, both center on:

- Robust automation and trust between teams.

- The idea of collaboration and increased communication between teams.

- The end-to-end service lifecycle (build-test-release).

- Prioritizing continuous delivery as well as high quality.

Yet there is one critical difference: deploying software code in production is fundamentally different from deploying machine learning models in production. While software code is relatively static, data is always changing. That means machine learning models are constantly learning and adapting (or not, as the case may be) to new inputs. The complexity of this environment, including the fact that machine learning models are made up of both code and data, is what makes MLOps a new and unique discipline.

MLOps Risk Assessment

MLOps isn't just for massive organizations with tens or hundreds of models in production; on the contrary, it's important to any team that has even one model in production. Depending on the model, continuous performance monitoring and adjustment could be essential. By allowing safe and reliable operations, MLOps is key to mitigating the risks induced by the use of ML models. However, MLOps practices do come at a cost, so a proper cost-benefit evaluation should be performed for each use case.

For example, the stakes are much lower for a recommendation engine used once a month to decide which marketing offer to send a customer than for a travel site whose pricing — and revenue — depends on a machine learning model. Therefore, when looking at MLOps as a way to mitigate risk, an analysis should cover:

- The risk that the model is unavailable for a given period of time

- The risk that the model returns a bad prediction for a given sample

- The risk that the model accuracy or fairness decreases over time

- The risk that the skills to maintain the models (i.e., data science talent) are lost

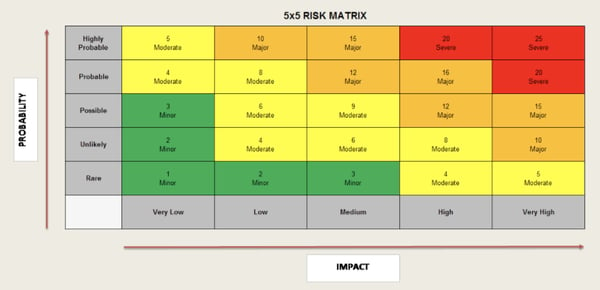

Risks are usually larger for models that are deployed widely and used outside of the organization. As shown in Figure 1, risk assessment is generally based on two metrics: the probability and the impact of the adverse event. Mitigation measures are generally chosen based on the combination of the two, i.e., the severity of the risk. Risk assessment should be performed at the beginning of each project and repeated periodically, as models may be used in ways that were not foreseen initially.

Figure 1: 5x5 Risk Matrix that may be leveraged for machine learning model risk and MLOps strategy.
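To make the probability-and-impact idea concrete, here is a minimal sketch of how the four risks listed above could be scored on a 5x5 matrix. It rests on several assumptions that are not from this post: the 1-to-5 scales, the use of a simple product as the "combination" of the two metrics, the band thresholds in `severity_band`, and the example scores themselves are all illustrative.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Risk:
    name: str
    probability: int  # assumed scale: 1 (rare) .. 5 (almost certain)
    impact: int       # assumed scale: 1 (negligible) .. 5 (severe)

    def severity(self) -> int:
        # Assumption: severity is the product of probability and impact.
        for value in (self.probability, self.impact):
            if not 1 <= value <= 5:
                raise ValueError("probability and impact must be in 1..5")
        return self.probability * self.impact


def severity_band(score: int) -> str:
    """Map a 1..25 severity score to an illustrative band (thresholds are made up)."""
    if score >= 15:
        return "high"
    if score >= 6:
        return "medium"
    return "low"


# Illustrative scores only; a real assessment would come from the project team.
risks = [
    Risk("model unavailable", probability=2, impact=5),
    Risk("bad prediction for a sample", probability=4, impact=2),
    Risk("accuracy or fairness decays", probability=3, impact=4),
    Risk("maintenance skills lost", probability=2, impact=3),
]

# Rank risks so the most severe ones are reviewed first.
ranked = sorted(risks, key=lambda r: r.severity(), reverse=True)
for r in ranked:
    print(f"{r.name}: {r.severity()} ({severity_band(r.severity())})")
```

Re-running a script like this at each periodic reassessment gives a simple record of how the ranking shifts as a model's usage changes.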

Mitigating Risk with MLOps

MLOps becomes critical for risk mitigation once a centralized team (with unique reporting of its activities, meaning that there can be multiple such teams at any given enterprise) has more than a handful of operational models. At this point, it becomes difficult to keep a global view of the state of these models without the standardization that allows the appropriate mitigation measures to be taken for each of them.

Pushing machine learning models into production without MLOps infrastructure is risky for many reasons, but first and foremost because fully assessing the performance of a machine learning model can often only be done in the production environment. Why? Because prediction models are only as good as the data they are trained on, which means the training data must be a good reflection of the data encountered in the production environment. If the production environment changes, then the model performance is likely to decrease rapidly.
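One way to check whether training data still reflects production data is to compare the distribution of a feature in both. The sketch below uses the Population Stability Index (PSI), which is one common choice and not a method prescribed by this post. The function name `psi`, the bin count, and the synthetic samples are all assumptions made for illustration.

```python
import math
import random


def psi(expected, actual, bins=10):
    """Population Stability Index between two numeric samples.

    Bin edges are taken from the range of `expected` (the training sample).
    """
    lo, hi = min(expected), max(expected)
    if hi == lo:
        raise ValueError("expected sample has no spread")
    width = (hi - lo) / bins

    def fractions(sample):
        counts = [0] * bins
        for x in sample:
            # Clamp values outside the training range into the edge bins.
            idx = int((x - lo) / width)
            idx = min(max(idx, 0), bins - 1)
            counts[idx] += 1
        # A small floor avoids log(0) when a bin is empty.
        return [max(c / len(sample), 1e-6) for c in counts]

    e, a = fractions(expected), fractions(actual)
    return sum((ai - ei) * math.log(ai / ei) for ei, ai in zip(e, a))


# Synthetic data standing in for one numeric feature.
rng = random.Random(0)
train = [rng.gauss(0.0, 1.0) for _ in range(5000)]
prod_same = [rng.gauss(0.0, 1.0) for _ in range(5000)]
prod_shifted = [rng.gauss(1.0, 1.0) for _ in range(5000)]

print("PSI, unchanged production data:", psi(train, prod_same))
print("PSI, shifted production data:  ", psi(train, prod_shifted))
```

A PSI near zero suggests the two samples look alike, while larger values suggest drift. A commonly cited rule of thumb treats values above roughly 0.2 as worth investigating, though any threshold should be tuned to the use case.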

Another major risk factor is that machine learning model performance is often very sensitive to the production environment the model runs in, including the versions of software and operating systems it uses. Models tend not to be buggy in the classic software sense, because most weren't written by hand but rather were machine-generated. Instead, the problem is that they are often built on a stack of open-source software (e.g., libraries such as scikit-learn, the Python runtime, or the Linux operating system), and it is critically important that the versions of this software in production match those that the model was verified on.
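A lightweight way to catch version mismatches is to record the package versions a model was verified on and compare them with what is installed in production. The sketch below uses the standard library's `importlib.metadata`. The helper name `find_version_mismatches`, its optional `installed_version` parameter (added so the check can be exercised without a real environment), and the pinned versions are hypothetical.

```python
from importlib import metadata


def find_version_mismatches(pinned, installed_version=None):
    """Return {package: (expected, found)} for every mismatch.

    `found` is None when the package is not installed.
    `installed_version` defaults to importlib.metadata.version.
    """
    lookup = installed_version or metadata.version
    mismatches = {}
    for package, expected in pinned.items():
        try:
            found = lookup(package)
        except metadata.PackageNotFoundError:
            found = None
        if found != expected:
            mismatches[package] = (expected, found)
    return mismatches


# Hypothetical versions recorded when the model was validated.
pinned = {"scikit-learn": "1.4.2", "numpy": "1.26.4"}

# The result depends on the environment this runs in.
print(find_version_mismatches(pinned))
```

A check like this could run at service startup or in a deployment pipeline, failing fast when the returned dictionary is not empty. It only covers Python packages; the interpreter and operating system versions would need their own checks.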

Ultimately, pushing models into production is not the final step of the machine learning lifecycle; in fact, far from it. It’s often just the beginning of monitoring their performance and ensuring that they behave as expected. As more data scientists start pushing more machine learning models into production, MLOps becomes critical in mitigating the potential risks, which (depending on the model) can be devastating for the business if things go wrong. Monitoring is also essential so that the organization has precise knowledge of how broadly each model is used.
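As a rough illustration of what such monitoring might track, the sketch below keeps a rolling accuracy over the most recent predictions and a running count of how often the model is used. The class name `RollingAccuracyMonitor`, the window size, and the alert threshold are assumptions. It also assumes ground-truth labels eventually become available, which is not true for every use case.

```python
from collections import deque


class RollingAccuracyMonitor:
    """Track accuracy over the last `window` predictions and total usage."""

    def __init__(self, window=100, threshold=0.8):
        self.outcomes = deque(maxlen=window)  # True where prediction == actual
        self.threshold = threshold
        self.total_predictions = 0  # how broadly the model is used

    def record(self, predicted, actual):
        self.outcomes.append(predicted == actual)
        self.total_predictions += 1

    def accuracy(self):
        if not self.outcomes:
            return None
        return sum(self.outcomes) / len(self.outcomes)

    def needs_attention(self):
        # Only alert once the window is full, to avoid noisy early alarms.
        window_full = len(self.outcomes) == self.outcomes.maxlen
        acc = self.accuracy()
        return window_full and acc is not None and acc < self.threshold


# Tiny example with made-up predictions and labels.
monitor = RollingAccuracyMonitor(window=4, threshold=0.75)
for predicted, actual in [(1, 1), (0, 0), (1, 0), (0, 1)]:
    monitor.record(predicted, actual)

print(monitor.accuracy(), monitor.needs_attention(), monitor.total_predictions)
```

In practice, the same pattern extends to other metrics, such as fairness measures or latency, and the alert would typically feed a dashboard or paging system rather than a print statement.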