Once a data science project has progressed through the stages of data cleaning and preparation, analysis and experimentation, modeling, testing, and evaluation, it reaches a critical point, one that separates the average company from the truly data-powered one: it needs to be operationalized.

Operationali-what?

After significant time and effort have been invested in a data science project and a viable machine learning model has been produced, it’s not time to celebrate. At least, not yet.

In order to realize real business value from this project, the machine learning model must not sit on the shelf; it needs to be operationalized. Operationalization (o16n) simply means deploying a machine learning model for use across the organization.

In data science projects, the derivation of business value follows something akin to the Pareto Principle: the vast majority of the business value is generated by the final few steps, namely the o16n of that project. This is especially true of applications such as real-time pricing, instant approval of loan applications, or real-time fraud detection. In these examples, o16n is vital for the business to realize the full benefits of its data science efforts.

How you might feel when someone says the word "operationalization" (before reading this blog post + the guidebook, of course)

Key Challenges

Data teams face a number of challenges with machine learning model o16n. Typically, getting a model deployed requires coordination across individuals or teams, because the responsibility for model development and the responsibility for model deployment lie with different people.

Once the model is in production and running, however, teams then face the problem of maintaining it and of tracking and monitoring its performance over time. In some cases, by the time a model is finally deployed, it is already outdated because new, evolving data keeps coming in.

As the model is re-trained and the deployment is updated, keeping track of different model versions in various stages of development becomes an ever more demanding task - and this is just for one model. Now imagine having tens or hundreds of them.

Dataiku’s Solution

To tackle these issues and to help enterprises truly leverage and extract value from their data science and machine learning initiatives, Dataiku offers the Model API Deployer. The Model API Deployer is a user-friendly web interface that empowers data science teams to deploy, manage, and monitor their own machine learning models at scale.

Core Principles of O16n

The Model API Deployer is built upon what we believe are the core principles for effective o16n of artificial intelligence (AI) in the enterprise:

- The 360° View: A user interface that provides instant visibility into what is deployed and where, with deployment status, infrastructure health, and monitoring available at a glance.

- Versioning, Monitoring, and Auditing: The ability to examine the timeline of a model deployment: to find out when a model was deployed, who deployed it, and to which infrastructure. Set up user permissions and a workflow from development to test to production in accordance with how your business and team operate.

- Agility and Flexibility: The ability to quickly deploy or roll back a version of a model in response to changing data or business needs (and for anyone on the team with the appropriate permissions to be able to do so). Being infrastructure agnostic, enabling deployments on-premises or to the cloud with Docker and Kubernetes, so that teams can use the backend that is most suited to their needs.

- Being Truly End-to-End: Seamlessly integrating with data ingestion, preparation, and modeling. Maintaining the consistency and coherency of an entire data science project, where final deployments can be traced right back to the initial datasets and processes and actions that took place within the project.
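To make the versioning, auditing, and rollback principles a little more concrete, here is a minimal sketch in Python. It is purely illustrative: `DeploymentRegistry`, `DeploymentEvent`, and every method and field name are hypothetical, and none of this is the Model API Deployer's actual API. It only shows the idea of an append-only deployment timeline with a simple permission check.

```python
from __future__ import annotations

from dataclasses import dataclass, field
from datetime import datetime, timezone


@dataclass(frozen=True)
class DeploymentEvent:
    """One entry in the audit timeline: what was deployed, by whom, and where."""
    model_version: str
    deployed_by: str
    infrastructure: str
    action: str  # "deploy" or "rollback"
    timestamp: datetime


@dataclass
class DeploymentRegistry:
    """Hypothetical in-memory registry (an assumption, not a Dataiku API)."""
    deployers: set[str]
    timeline: list[DeploymentEvent] = field(default_factory=list)

    def _record(self, version: str, user: str, infrastructure: str, action: str) -> DeploymentEvent:
        # Only users with the appropriate permission may change a deployment.
        if user not in self.deployers:
            raise PermissionError(f"{user} is not allowed to deploy")
        event = DeploymentEvent(version, user, infrastructure, action,
                                datetime.now(timezone.utc))
        self.timeline.append(event)  # append-only, so history is never lost
        return event

    def deploy(self, version: str, user: str, infrastructure: str) -> DeploymentEvent:
        return self._record(version, user, infrastructure, "deploy")

    def active_version(self, infrastructure: str) -> str | None:
        # The most recent event for an infrastructure defines what is live there.
        for event in reversed(self.timeline):
            if event.infrastructure == infrastructure:
                return event.model_version
        return None

    def rollback(self, user: str, infrastructure: str) -> DeploymentEvent:
        # Re-activate the most recent version that differs from the live one.
        current = self.active_version(infrastructure)
        for event in reversed(self.timeline):
            if event.infrastructure == infrastructure and event.model_version != current:
                return self._record(event.model_version, user, infrastructure, "rollback")
        raise LookupError("no earlier version to roll back to")


registry = DeploymentRegistry(deployers={"alice"})
registry.deploy("v1", "alice", "prod")
registry.deploy("v2", "alice", "prod")
registry.rollback("alice", "prod")
print(registry.active_version("prod"))
```

Because every deploy and rollback is appended rather than overwritten, the timeline can answer the "when, who, and where" questions above at any point. A real system would persist this history and tie it to actual infrastructure, which this sketch does not attempt.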

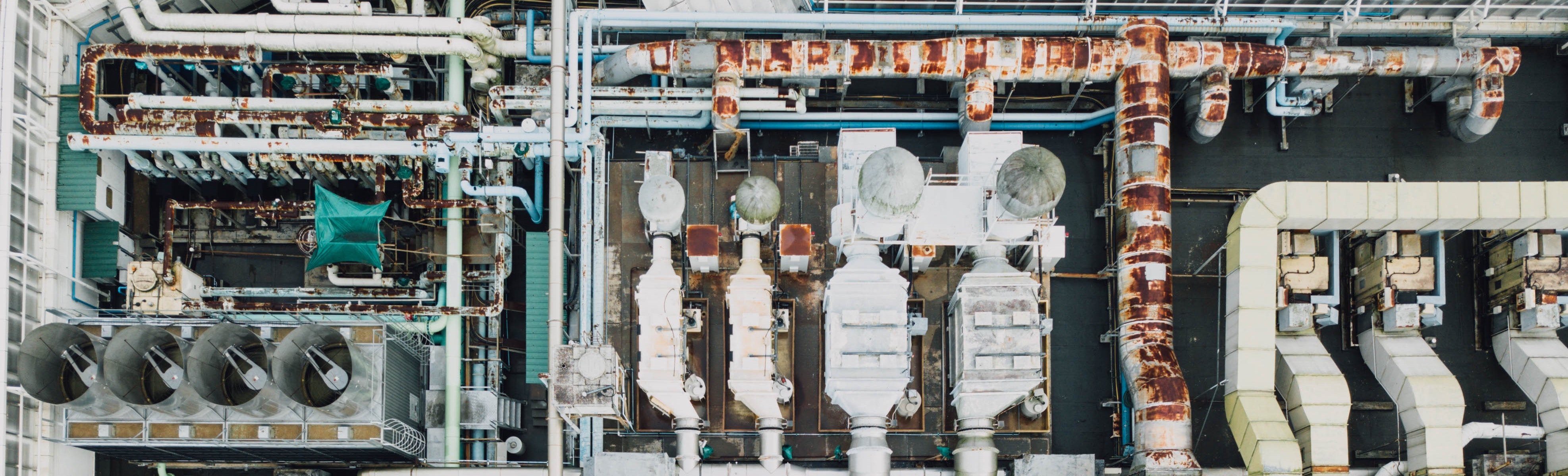

Flexibility + agility: just one of the core components of o16n

As data teams and projects grow in size and number, the challenges that organizations face in operationalization will also increase. The Model API Deployer solves many of these challenges by optimizing time to production, increasing collaboration, reducing cross-functional dependencies, improving reliability, reducing errors, and providing visibility and traceability at all times.

Read the technical documentation for the Dataiku API Deployer.