Alan Turing, the British mathematician, World War II codebreaker, and computer science trailblazer, would have been 108 years old today. In this blog post, we highlight his key achievements and his lasting impact on computer science and machine learning (ML), and we speculate on what he might have thought of the state of Enterprise AI today.

Academic and Wartime Background

To begin, Turing’s genius is evident in his academic history: he studied mathematics at King’s College, Cambridge, was elected a fellow there for his research in probability theory, and later received his doctoral degree in mathematical logic from Princeton.

He wrote the famous paper “On Computable Numbers, With an Application to the Entscheidungsproblem [Decision Problem],” which addressed a fundamental question: is there a general method for deciding which mathematical statements are provable within a given formal mathematical system and which are not? Turing showed that no such method exists, meaning that no algorithm can correctly decide every instance of the problem.

In the course of his work on the Entscheidungsproblem, Turing invented the Turing machine, an abstract computing device designed to investigate the extent and limits of what can in fact be computed. Today, Turing machines are considered one of the foundational concepts of computability and theoretical computer science, and a precursor to the modern computer.
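To make the idea concrete, here is a minimal sketch of a single-tape Turing machine simulator. The function name, the transition-table format, and the example machine (which adds one to a binary number) are our own illustrative choices, not anything taken from Turing's paper.

```python
from collections import defaultdict

BLANK = "_"  # assumed symbol for an empty tape cell


def run_turing_machine(transitions, tape, start_state, halt_states, max_steps=10_000):
    """Simulate a single-tape Turing machine.

    transitions maps (state, symbol) -> (next_state, symbol_to_write, move),
    where move is "L" or "R". Returns (final_state, tape_contents).
    """
    cells = defaultdict(lambda: BLANK, enumerate(tape))  # unbounded tape
    state, head, steps = start_state, 0, 0
    while state not in halt_states:
        if steps >= max_steps:
            raise RuntimeError("step limit reached without halting")
        state, write, move = transitions[(state, cells[head])]
        cells[head] = write
        head += 1 if move == "R" else -1
        steps += 1
    used = [i for i, s in cells.items() if s != BLANK]
    if not used:
        return state, ""
    return state, "".join(cells[i] for i in range(min(used), max(used) + 1))


# Hypothetical example machine: increment a binary number by one.
INCREMENT = {
    ("right", "0"): ("right", "0", "R"),    # scan to the end of the number
    ("right", "1"): ("right", "1", "R"),
    ("right", BLANK): ("carry", BLANK, "L"),
    ("carry", "1"): ("carry", "0", "L"),    # 1 + 1 = 0, carry continues
    ("carry", "0"): ("halt", "1", "L"),     # absorb the carry and stop
    ("carry", BLANK): ("halt", "1", "L"),   # carry past the leftmost digit
}

print(run_turing_machine(INCREMENT, "1011", "right", {"halt"}))  # ('halt', '1100')
```

The step limit is a practical safeguard only. As Turing's result implies, there is no general way to know in advance whether an arbitrary machine will ever halt.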

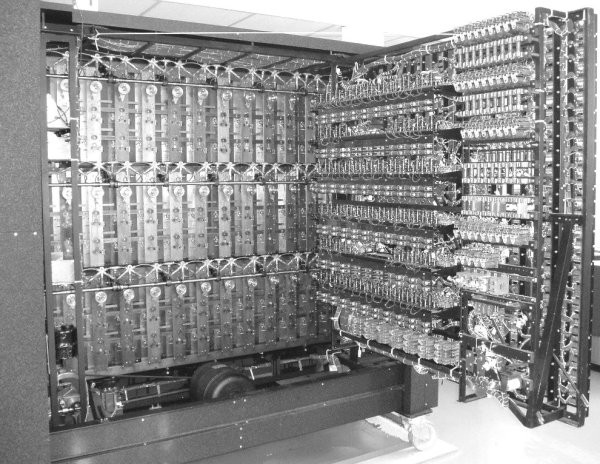

Further, Turing worked for the Government Code and Cypher School (GCCS), a British code-breaking organization. It was here that he made five major advances in the field of cryptanalysis, most notably the Bombe, an electromechanical device used to help decipher messages encrypted with Enigma, the main machine used by the German military to encrypt secret radio messages.

During this time, German submarines were hunting Allied ships carrying important cargo for the war. The Allied forces relied on Turing and the cryptologists at GCCS to decode intercepted messages so that ships could alter their course, which is why the codebreakers are viewed as having played a pivotal role in the Allied victory. In early 1942, the team at GCCS is said to have decoded about 39,000 intercepted messages per month, a figure that later rose to over 84,000 per month, or roughly two messages every minute, day and night.

Turing also developed a machine called Delilah that could securely encode a voice message. Its scrambling was based on arithmetic and could be applied to a radio or telephone conversation. It worked by combining the speech to be scrambled with what sounded like random noise, similar to radio static.
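As a rough illustration of combining speech with noise through arithmetic, the toy sketch below adds a noise-like key to a list of sampled values, wrapping around at a fixed modulus, and then subtracts the same key to recover the original. The 8-bit sample range, the function names, and the use of Python's `random` module as the noise source are all assumptions made for this example. It is not a description of Delilah's actual design, and `random` is not suitable for real cryptography.

```python
import random

MODULUS = 256  # assumption: samples quantized to 256 levels for illustration


def make_key(length, seed):
    """Generate a noise-like key. NOT cryptographically secure; illustration only."""
    rng = random.Random(seed)
    return [rng.randrange(MODULUS) for _ in range(length)]


def scramble(samples, key):
    """Add the key to each speech sample, wrapping around at MODULUS."""
    if len(samples) != len(key):
        raise ValueError("key must be as long as the message")
    return [(s + k) % MODULUS for s, k in zip(samples, key)]


def unscramble(scrambled, key):
    """Subtract the same key to recover the original samples."""
    if len(scrambled) != len(key):
        raise ValueError("key must be as long as the message")
    return [(c - k) % MODULUS for c, k in zip(scrambled, key)]


# Hypothetical sampled speech values in the range 0..255.
speech = [12, 40, 97, 130, 201, 255, 180, 64]
key = make_key(len(speech), seed=1943)

noisy = scramble(speech, key)
recovered = unscramble(noisy, key)
print(recovered == speech)  # True
```

Both ends of the conversation need the same key, which is why the sender and receiver in this sketch share a seed.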

This is the Bombe, the machine designed by Turing during World War II to help decipher German Enigma codes.

What Would Turing Think of Enterprise AI Today?

In the biography “Alan Turing: The Enigma” by Andrew Hodges, Turing is quoted as saying, “The isolated man does not develop any intellectual power. It is necessary for him to be immersed in an environment of other[s]... The search for new techniques must be regarded as carried out by the human community as a whole, rather than by individuals.”

While Turing himself was a genius, he did not achieve his work alone. Collaboration and teamwork were very important to him throughout his career, as he worked alongside other mathematicians, engineers, and scientists. This notion of collaboration rings true with global data teams today, and Turing would likely approve.

Collaboration on AI projects among people with different experiences, strengths, and educational backgrounds enables many positive outcomes, including greater transparency and visibility and a stronger sense of ownership and responsibility. Further, different teams working together toward a common goal can eventually lead to wider adoption of AI processes throughout an organization, transforming how teams work and driving more efficiency.

Further, Turing’s achievements in cryptography were developed during wartime to help maintain public safety and security, and a comparable emphasis on compliance and fairness still applies. Today, it is business-critical for teams pursuing data efforts to have systems and processes in place that allow them to extract insights that demonstrate the true value of data without compromising individuals’ privacy. To achieve data minimization (where only the personal data needed for each project gets processed, thanks to the proper separation of projects and to anonymization and pseudonymization wherever necessary), teams need to know which data sources are used where and which ones contain sensitive or personal information.
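As a small illustration of data minimization with pseudonymization, the sketch below keeps only the fields a project needs and replaces a direct identifier with a keyed hash (HMAC-SHA256 from Python's standard library). The field names, the helper functions, and the hard-coded key are hypothetical and exist only for this example. In practice the key would live in a secrets manager, and the right approach depends on an organization's own privacy requirements.

```python
import hashlib
import hmac


def pseudonymize(value: str, secret_key: bytes) -> str:
    """Replace an identifier with a keyed hash so records can still be joined."""
    return hmac.new(secret_key, value.encode("utf-8"), hashlib.sha256).hexdigest()


def minimize_record(record, needed_fields, pseudonymized_fields, secret_key):
    """Keep only the needed fields, pseudonymizing the ones marked as identifiers."""
    minimized = {}
    for field in needed_fields:
        value = record[field]
        if field in pseudonymized_fields:
            value = pseudonymize(str(value), secret_key)
        minimized[field] = value
    return minimized


# Hypothetical record and project configuration.
SECRET_KEY = b"example-key-do-not-use-in-production"
customer = {
    "email": "ada@example.com",
    "full_name": "Ada Example",
    "postcode": "AB1 2CD",
    "monthly_spend": 42.5,
}

project_view = minimize_record(
    customer,
    needed_fields=["email", "monthly_spend"],  # the project does not need name or postcode
    pseudonymized_fields={"email"},            # identifier is kept only as a pseudonym
    secret_key=SECRET_KEY,
)
print(sorted(project_view))  # ['email', 'monthly_spend']
```

Because the hash is keyed, the same email always maps to the same pseudonym within a project, while someone without the key cannot recompute the mapping from a list of known emails.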

Data leaders also need to determine what “responsible” practices are, both in their organizations and in the context of AI. The guidelines should reinforce concepts like explainability, transparency, and inclusivity. Dataiku’s end-to-end platform, for example, is committed to supporting organizations in building an AI strategy that is responsible through accountability, sustainability, and governability. We aim to ensure models are designed and behave in ways that align with their intended purpose and do so in a way that is centrally controlled and managed.

Next, Turing’s work on Delilah can be loosely connected to natural language processing (NLP), a branch of AI that deals with the interaction between humans and computers through natural language. The primary objective of NLP is to read, decipher, understand, and make sense of human language, so Turing would likely be deeply interested in finding new subfields and applications for NLP, in addition to the growing use of techniques like sentiment analysis, speech recognition, and information extraction.

In 1999, “Time” magazine named him one of its “100 Most Important People of the 20th Century,” noting that everyone who works at a keyboard, opens a spreadsheet, or works in a word-processing program “is working on an incarnation of a Turing machine.”

Turing’s impact on the state of ML today is vast and profound. While I think Turing would be proud of the great strides that have been made in data science, machine learning, and AI since his passing, I’m willing to bet he would admit that there is still a long way to go.