I recently launched my latest little side project called Human or Company: a web page where anyone can enter a Twitter username and instantly determine whether that username belongs to a person or a company.

In this post, I will share how I created the model behind the site, the features that influence the classification, and how I built the site to respond to real-time requests. Although this is a low-key and modest side project, it is a great example of building a real-time prediction service with Dataiku, and it shows that trendy terms like machine learning or artificial intelligence can be applied in simple and small (but effective!) ways.

Many people I meet are confused about real-time applications of machine learning. In the case of Human or Company, the model is prepared and trained in advance, and only the prediction is computed in real time. This approach is accurate enough in many cases, easy to maintain, and lets you evaluate the model before going live.

By the way, if you want to learn more about real-time prediction with Dataiku, check out this tutorial or the full documentation.

The Idea

I am a product manager here at Dataiku, a user of Twitter, and I like to run data science models on a variety of datasets for work — but also for fun!

For a few months, I had been thinking about building an open demo of real-time prediction capabilities. When I found a dataset by CrowdFlower from a Kaggle competition in 2015 containing approximately 20,000 Twitter usernames, I knew I could do something with it. The dataset labeled the type of each user (male, female, or brand), along with some other information. For my project, I decided to focus only on classifying individuals versus non-individuals.

From the dataset, I kept only two columns: the Twitter username and the label (individual or non-individual). I wrote a Python script to enrich each Twitter user with their full name, bio, location, external link, and their numbers of Tweets, followers, followings, and favorites. For this first attempt, I kept things simple. In the end, I had a list of 14,000 users, down from about 20,000, probably because some accounts had been deleted or blocked since 2015.
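The enrichment step can be sketched roughly as follows. This is a minimal sketch, not the original script: it assumes each profile arrives as a dict keyed like the Twitter API v1.1 user object (`description` for the bio, `url` for the external link, `statuses_count`, `followers_count`, `friends_count`, `favourites_count`), and `lookup` is a hypothetical function that returns `None` for deleted or suspended accounts, which is how such rows would drop out of the dataset.

```python
from typing import Callable, Optional

# Field names follow the Twitter API v1.1 user object; choosing these exact
# fields is an assumption for illustration, not the author's original script.
TEXT_FIELDS = ("name", "description", "location", "url")
COUNT_FIELDS = ("statuses_count", "followers_count", "friends_count", "favourites_count")


def enrich(profile: Optional[dict]) -> Optional[dict]:
    """Flatten a raw profile into one feature row, or None if the account is gone."""
    if profile is None:  # deleted, suspended, or protected account: no row
        return None
    row = {field: profile.get(field) or "" for field in TEXT_FIELDS}
    row.update({field: int(profile.get(field) or 0) for field in COUNT_FIELDS})
    return row


def build_dataset(labels: dict, lookup: Callable[[str], Optional[dict]]) -> list:
    """Join each labeled username with its enriched profile, skipping missing users.

    `lookup` is a hypothetical callable mapping a username to a profile dict (or None).
    """
    rows = []
    for username, label in labels.items():
        features = enrich(lookup(username))
        if features is not None:
            features["username"] = username
            features["label"] = label
            rows.append(features)
    return rows
```

Passing `lookup` in as a parameter keeps the sketch independent of any particular Twitter client library.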

First Model

I quickly ran an initial predictive model to see if I could derive any interesting value from this dataset. For those unfamiliar, the idea is to see whether there is any pattern in the data (e.g., a word in the bio, the number of Tweets, etc.) that indicates whether the Twitter account belongs to a human or a company.

The answer was yes!

The first model (a logistic regression classifier) gave me an accuracy of more than 80% and an AUC of 0.84. The results also made sense: they showed that the probability of an account belonging to a company increases with the number of Tweets, likes, or followings. On the other hand, if you've linked to an Instagram or LinkedIn account in your bio, you are more likely to be a human.
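Accuracy and AUC measure different things: accuracy counts correct labels at a fixed threshold, while AUC is the probability that a randomly chosen company account gets a higher score than a randomly chosen individual account. Here is a minimal pure-Python sketch of both metrics on toy numbers (not the project's data), assuming "company" is encoded as the positive class 1:

```python
def roc_auc(labels, scores):
    """AUC as the fraction of (positive, negative) pairs ranked correctly; ties count half."""
    pos = [s for y, s in zip(labels, scores) if y == 1]
    neg = [s for y, s in zip(labels, scores) if y == 0]
    if not pos or not neg:
        raise ValueError("AUC needs at least one positive and one negative example")
    wins = 0.0
    for p in pos:
        for n in neg:
            if p > n:
                wins += 1.0
            elif p == n:
                wins += 0.5
    return wins / (len(pos) * len(neg))


def accuracy(labels, scores, threshold=0.5):
    """Share of examples whose thresholded score matches the true label."""
    preds = [1 if s >= threshold else 0 for s in scores]
    return sum(p == y for p, y in zip(preds, labels)) / len(labels)


labels = [1, 1, 0, 0]          # 1 = company, 0 = individual (toy labels)
scores = [0.9, 0.4, 0.6, 0.1]  # toy model scores
print(roc_auc(labels, scores), accuracy(labels, scores))
```

On this toy example only one of the four (company, individual) pairs is ranked the wrong way round, giving an AUC of 0.75, while the 0.5 threshold yields 50% accuracy. For real data, scikit-learn's `roc_auc_score` computes the same quantity more efficiently.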

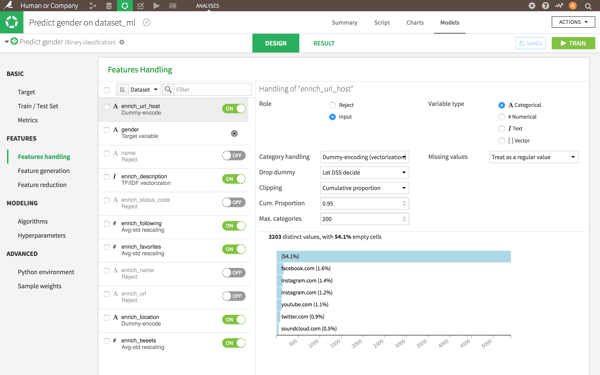

Features used for the modeling.

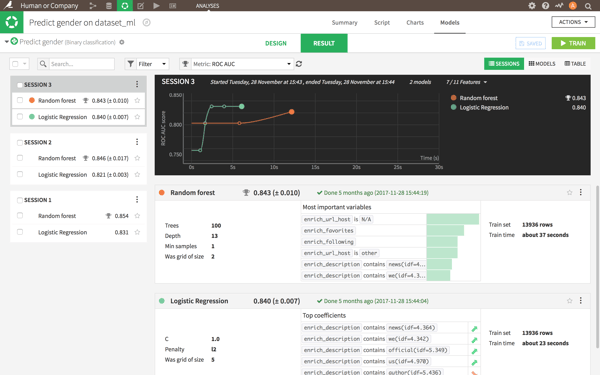

In terms of models, I only compared two algorithms, logistic regression and random forest, and they performed very similarly:

Models comparison.

There is some room for improving the model. One promising avenue is analyzing the profile image: a company very likely uses a logo, whereas an individual tends to use a photo or an avatar. However, image recognition can be complicated and resource-intensive, and it could delay the real-time prediction by a few hundred milliseconds, so I decided not to include it in the first version.

Building a Prediction API Service

After creating a machine learning model (like I did in the previous section), you can use it to predict new values. Put simply, you get a mathematical formula that you can later use to generate a score for any username.
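For logistic regression, that formula is a weighted sum of the features passed through the logistic (sigmoid) function: p(company) = 1 / (1 + exp(-(b + w1·x1 + ... + wn·xn))). A minimal sketch follows, with hypothetical weights and feature names invented for illustration (the post does not publish the real coefficients); only the signs mirror the findings above: more Tweets or followings push towards "company", and an Instagram mention in the bio pushes towards "human".

```python
import math

# Hypothetical coefficients for illustration only, not the trained model's values.
# Positive weights push the score towards "company", negative ones towards "human".
WEIGHTS = {"log_statuses": 0.4, "log_friends": 0.3, "bio_mentions_instagram": -1.2}
BIAS = -2.0


def to_features(statuses_count: int, friends_count: int, bio: str) -> dict:
    """Turn raw profile values into model inputs (hypothetical feature set)."""
    return {
        "log_statuses": math.log1p(statuses_count),  # log dampens very large counts
        "log_friends": math.log1p(friends_count),
        "bio_mentions_instagram": 1.0 if "instagram" in bio.lower() else 0.0,
    }


def company_probability(features: dict) -> float:
    """Logistic regression score: sigmoid of bias plus weighted feature sum."""
    z = BIAS + sum(w * features.get(name, 0.0) for name, w in WEIGHTS.items())
    return 1.0 / (1.0 + math.exp(-z))


print(company_probability(to_features(10000, 500, "Official news and offers")))
print(company_probability(to_features(10, 500, "Coffee lover, also on Instagram")))
```

Because the training happens offline, serving a prediction only requires evaluating this cheap formula, which is what makes sub-second responses easy.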

So I picked some Twitter usernames that were not in the original dataset, applied my enrichment function to get all the derived variables, and applied my model to get the prediction. There were some errors, but in general it worked very well.

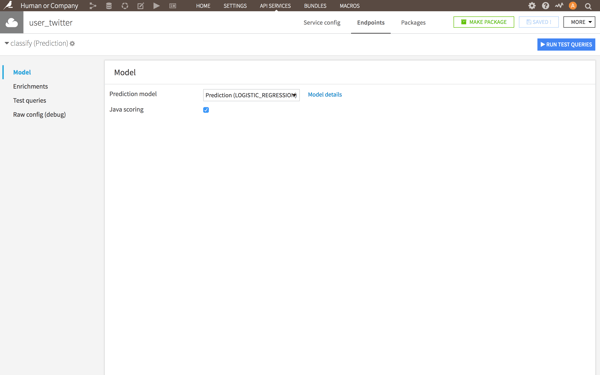

From there, I created an API service to automate the predictions. With Dataiku, that part is super easy. I created two functions (or endpoints), one for the model prediction and one for the enrichment (fetching the Twitter profile information), and combined them so they are available in a single call.

The screen to configure a Model Prediction API Service
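Chaining the two endpoints can be pictured as one handler that runs enrichment, then prediction. The sketch below is plain Python, not Dataiku's API: the request shape (`{"username": ...}`), the response fields, the 0.5 threshold, and the injected `lookup` and `score` callables are all assumptions made for illustration.

```python
import json
from typing import Callable, Optional


def handle_request(body: str,
                   lookup: Callable[[str], Optional[dict]],
                   score: Callable[[dict], float],
                   threshold: float = 0.5) -> str:
    """Hypothetical combined endpoint: parse the request, enrich the user, score it."""
    username = json.loads(body).get("username", "").strip().lstrip("@")
    profile = lookup(username)  # step 1: enrichment (fetch profile information)
    if profile is None:
        return json.dumps({"username": username, "error": "user not found"})
    probability = score(profile)  # step 2: model prediction
    label = "company" if probability >= threshold else "human"
    return json.dumps({"username": username, "prediction": label,
                       "probability": round(probability, 3)})
```

Injecting `lookup` and `score` keeps the handler testable without network access; in a real service they would call Twitter and the trained model respectively.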

The next step was building the web page (with the help of my colleague JB for the design). Technically, the site is just an input field, a submit button, and some JavaScript code that sends the username to the API to get and display the result.
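On the client side, the page only has to POST the username and read back the JSON result. For consistency with the other examples, here is the equivalent request built in Python with the standard library rather than in the page's JavaScript; the endpoint URL and payload shape are placeholders, since the post does not give the real API address.

```python
import json
import urllib.request

# Placeholder endpoint: the real API address is not given in the post.
API_URL = "https://api.example.com/predict"


def build_prediction_request(username: str) -> urllib.request.Request:
    """Build (but do not send) a JSON POST request carrying the username."""
    payload = json.dumps({"username": username}).encode("utf-8")
    return urllib.request.Request(
        API_URL,
        data=payload,
        headers={"Content-Type": "application/json"},
        method="POST",
    )


# Sending it would look like this (not executed here):
# with urllib.request.urlopen(build_prediction_request("jack")) as resp:
#     result = json.load(resp)
```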

Launch

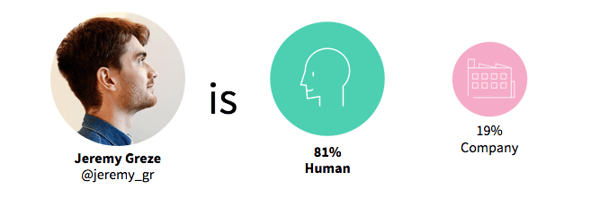

And that was it. It is now online at www.humanorcompany.com, so give it a try and get a real-time prediction!

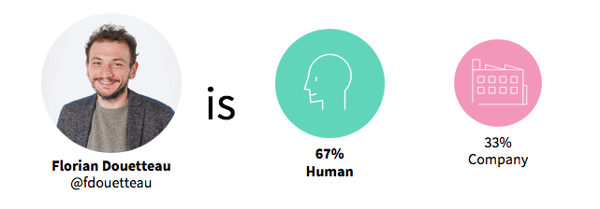

At least, it works for me. :)

And I'm more human than my boss. I'm not sure what that means :D

I really like the fact that you can click on a button and get a predictive model result within a second. I hope that seeing this project will inspire others to think of more creative, bigger use cases for real-time prediction!

Feel free to contact me with any questions or feedback you might have! You can find me on Twitter and also read another post about this project on my personal blog.