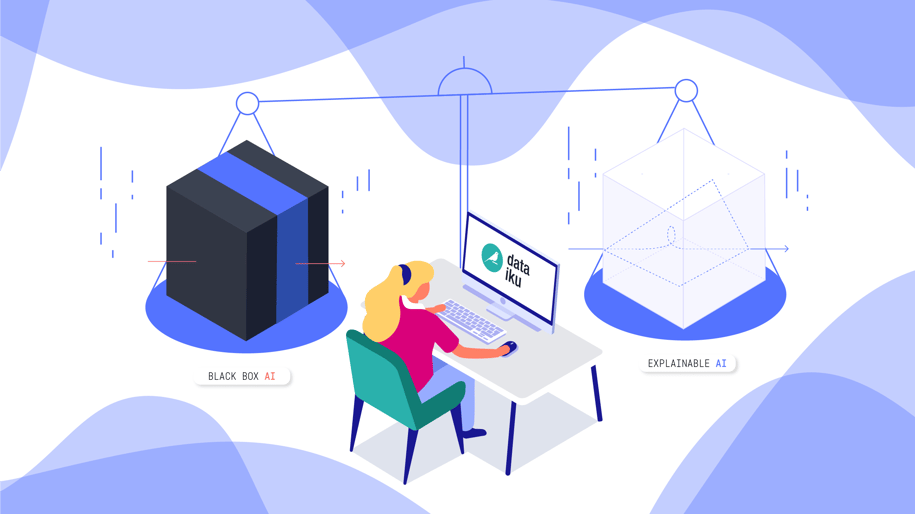

Data scientists and business leaders who build or use machine learning models and AI systems face a serious challenge today: how to balance interpretability and accuracy, a tradeoff that stems from the difference between black-box and white-box models.

The Challenge

We live in a world of black-box models and white-box models. On the one hand, black-box models have observable input-output relationships but lack clarity around their inner workings (think: a model that takes customer attributes as inputs and outputs a car insurance rate, with no discernible explanation of how). This is typical of deep learning, boosted-tree, and random forest models, which model incredibly complex situations with high non-linearity and interactions between inputs.

On the other hand, white-box models have observable, understandable behaviors, features, and relationships between influencing variables and output predictions (think: linear regressions and decision trees), but they are often less performant than black-box models (i.e., lower accuracy, but higher explainability).

In an ideal world, every model would be explainable and transparent, which is especially useful for:

- Critical decisions (e.g., healthcare)

- Seldom-made or non-routine decisions (e.g., M&A work)

- Stakeholder justification-required decisions (e.g., strategic business choices)

- High-touch human judgement decisions (e.g., portfolio manager-vetted investments)

- Situations where interactions matter more than outcomes (e.g., root cause analysis)

In the real world, however, there is a time and place for both types of models. Not all decisions are the same, and developing interpretable models is very challenging (and in some cases impossible, for example when modeling a complex scenario or a high-dimensional space, as in image classification). Even in less complex scenarios, black-box models typically outperform their white-box counterparts because they can capture high non-linearity and interactions between features (think: a multi-layer neural network applied to a churn detection use case).
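To make the point about interactions concrete, here is a minimal, dependency-free Python sketch. It is a toy illustration, not a benchmark: the target is a pure interaction (`y = x1 * x2`) on a made-up grid, and the more flexible model is imitated by hand-adding the interaction term that a black-box model would have to discover on its own. The helper names (`solve`, `fit_ols`, `r_squared`) are assumptions introduced for this example.

```python
from itertools import product


def solve(a, b):
    """Solve a @ x = b by Gauss-Jordan elimination with partial pivoting."""
    n = len(b)
    m = [row[:] + [rhs] for row, rhs in zip(a, b)]
    for col in range(n):
        pivot = max(range(col, n), key=lambda r: abs(m[r][col]))
        m[col], m[pivot] = m[pivot], m[col]
        for r in range(n):
            if r != col:
                factor = m[r][col] / m[col][col]
                m[r] = [v - factor * p for v, p in zip(m[r], m[col])]
    return [m[i][n] / m[i][i] for i in range(n)]


def fit_ols(rows, y):
    """Least-squares coefficients from the normal equations (X^T X) w = X^T y."""
    k = len(rows[0])
    xtx = [[sum(r[i] * r[j] for r in rows) for j in range(k)] for i in range(k)]
    xty = [sum(r[i] * t for r, t in zip(rows, y)) for i in range(k)]
    return solve(xtx, xty)


def r_squared(rows, y, w):
    """Share of the variance in y explained by the linear fit w."""
    mean_y = sum(y) / len(y)
    ss_res = sum(
        (t - sum(wi * xi for wi, xi in zip(w, r))) ** 2 for r, t in zip(rows, y)
    )
    ss_tot = sum((t - mean_y) ** 2 for t in y)
    return 1 - ss_res / ss_tot


# Toy data: the target depends only on the interaction between x1 and x2.
grid = [-1.0, -0.5, 0.0, 0.5, 1.0]
points = list(product(grid, grid))
y = [x1 * x2 for x1, x2 in points]

# Purely additive (white-box style) features vs. features with the interaction.
additive = [[1.0, x1, x2] for x1, x2 in points]
with_interaction = [[1.0, x1, x2, x1 * x2] for x1, x2 in points]

r2_additive = r_squared(additive, y, fit_ols(additive, y))
r2_interaction = r_squared(with_interaction, y, fit_ols(with_interaction, y))
print(f"additive R^2: {r2_additive:.3f}, with interaction R^2: {r2_interaction:.3f}")
```

On this toy data the additive fit explains none of the variance, while the fit that includes the interaction term explains all of it. Real data is rarely this clean, but the accuracy gap between simple and flexible models often has the same source.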

Despite their higher performance, black-box models have several downsides. The first is simply the lack of explainability, both internally within a firm and externally to customers and regulators who want to know why a decision was made (consider the case last year of a black-box algorithm that erroneously cut medical coverage to long-time patients).

The second downside to black-box models is that a host of unseen problems could be affecting the output, such as overfitting, spurious correlations, or "garbage in / garbage out," and these are impossible to catch without an understanding of the black-box model’s operations. Another downside of not spending enough time understanding the reality behind the black-box model is that it creates a "comprehension debt" that must be repaid over time, whether through difficulty sustaining performance, unexpected effects like people gaming the system, or potential unfairness.

Finally, black-box models can lead to technical debt over time. Because the model may rely on spurious, non-causal correlations that quickly vanish, it must be reassessed and retrained more frequently as data drifts, ultimately driving up operating expenses (OPEX).
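Data drift of this kind can be quantified. As one hedged illustration, the sketch below computes the Population Stability Index (PSI), a common drift statistic, between a reference sample and newer data for a single numeric feature. PSI is chosen here for simplicity and is an assumption of this example, not a method named in this article; the function name `psi`, the ten equal-width bins, and the synthetic Gaussian samples are likewise illustrative.

```python
import math
import random


def psi(expected, actual, n_bins=10, eps=1e-6):
    """Population Stability Index between two samples of one numeric feature.

    Bins are equal-width over the range of `expected`; values of `actual`
    that fall outside that range are counted in the first or last bin.
    """
    lo, hi = min(expected), max(expected)
    width = (hi - lo) / n_bins or 1.0  # avoid a zero width for constant data

    def proportions(values):
        counts = [0] * n_bins
        for v in values:
            idx = int((v - lo) / width)
            counts[min(max(idx, 0), n_bins - 1)] += 1
        # Floor at eps so empty bins do not produce log(0) or division by zero.
        return [max(c / len(values), eps) for c in counts]

    p, q = proportions(expected), proportions(actual)
    return sum((qi - pi) * math.log(qi / pi) for pi, qi in zip(p, q))


# Synthetic data (illustrative only): a reference sample, a fresh sample from
# the same distribution, and a sample whose mean has shifted by one unit.
rng = random.Random(0)
reference = [rng.gauss(0.0, 1.0) for _ in range(2000)]
same_dist = [rng.gauss(0.0, 1.0) for _ in range(2000)]
shifted = [rng.gauss(1.0, 1.0) for _ in range(2000)]

psi_stable = psi(reference, same_dist)
psi_drifted = psi(reference, shifted)
print(f"PSI, same distribution: {psi_stable:.3f}")
print(f"PSI, shifted mean:      {psi_drifted:.3f}")
```

A widely quoted rule of thumb treats a PSI below 0.1 as stable and above 0.25 as a significant shift, though any such threshold should be validated for the use case at hand.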

So How Does Dataiku Help Find the Right Balance?

Dataiku helps customers balance interpretability and accuracy by providing a data science, machine learning, and AI platform built for best-practice methodology and governance throughout a model’s entire lifecycle. The following features in Dataiku help users find the right balance between black- and white-box models:

- Collaboration: Dataiku is an inclusive and collaborative data science and machine learning platform that democratizes access to data and enables enterprises to build their own path to AI. Bringing the right people, processes, data, and technologies together in a transparent way leads to better strategic decisions throughout the model lifecycle, including tradeoffs between black-box and white-box models, and to greater understanding of, and trust in, model outputs.

- Data preparation and analysis: Dataiku offers data lineage (so you know where the data originated); easy-to-use data transformation and cleaning (to ensure data quality); data analysis (to identify outliers and key characteristics of the data); sensitivity analysis (enhanced in our latest version); business knowledge enrichment (through plugins and business meaning detection); and many other features.

- Machine learning models: Dataiku offers the freedom to approach the modeling process in Expert Mode or to leverage AutoML to quickly and easily generate models using a variety of prebuilt white-box and black-box models, including logistic, ridge, and lasso regression; decision trees; random forests; XGBoost; SGD; K-Nearest Neighbors; Artificial Neural Networks; Deep Learning; and more, as well as the ability to import your own notebook-based custom algorithms.

- Partial dependence plots: Help model creators understand complex models visually by surfacing relationships between a feature and the target.

- Subpopulation analysis: Weed out unintended model biases and create a more transparent and fair deployment of AI.

- Model monitoring: Assess drift in the data to be scored, and determine when the model must be retrained, by comparing new data with the model's original test set to understand the drift factor using Drift score and Fugacity.
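Two of the diagnostics listed above, partial dependence and subpopulation analysis, are simple enough to sketch by hand. The following dependency-free Python illustrates the general techniques and is not Dataiku's implementation: `churn_score` is a hypothetical stand-in for an opaque model, and the four customer rows, their labels, and the function names are made up for the example.

```python
from collections import defaultdict


def partial_dependence(predict, rows, feature, grid):
    """Average prediction when `feature` is forced to each grid value in every row."""
    curve = []
    for value in grid:
        modified = [{**row, feature: value} for row in rows]
        curve.append(sum(predict(r) for r in modified) / len(modified))
    return curve


def accuracy_by_group(predict, rows, labels, group_key, threshold=0.5):
    """Accuracy computed separately for each value of `group_key`."""
    hits, totals = defaultdict(int), defaultdict(int)
    for row, label in zip(rows, labels):
        group = row[group_key]
        totals[group] += 1
        hits[group] += int((predict(row) >= threshold) == bool(label))
    return {group: hits[group] / totals[group] for group in totals}


def churn_score(row):
    """Hypothetical opaque model: returns a churn probability for one customer."""
    base = 0.8 if row["tenure_months"] < 12 else 0.2
    return base if row["plan"] == "basic" else base * 0.5


# Made-up customers and churn labels (1 = churned), for illustration only.
rows = [
    {"tenure_months": 3, "plan": "basic"},
    {"tenure_months": 6, "plan": "premium"},
    {"tenure_months": 24, "plan": "basic"},
    {"tenure_months": 36, "plan": "premium"},
]
labels = [1, 1, 0, 0]

# How does the average predicted churn change as tenure varies?
pd_curve = partial_dependence(churn_score, rows, "tenure_months", [6, 12, 24])

# Does the model perform equally well for each plan?
by_plan = accuracy_by_group(churn_score, rows, labels, "plan")

print("partial dependence on tenure:", pd_curve)
print("accuracy by plan:", by_plan)
```

The partial dependence curve shows the average score dropping once tenure reaches 12 months, and the per-plan breakdown shows the stand-in model misclassifying one of the two premium customers while getting both basic customers right. Neither observation requires looking inside the model, which is what makes these diagnostics useful for black-box models.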

To learn more about how Dataiku can assist your organization on the path to Enterprise AI and help in striking the right balance between interpretability and accuracy, give the platform a try.